Enterprise AI Coding Governance Service: From Codex Service Distribution to Multi-Provider Model Access, Sub-Account Governance, and Private Deployment

Posted April 12, 2026 by XAI Product Team ‐ 15 min read

What enterprises really need is not a single-vendor AI account, but a production system that can unify domestic and international AI Coding resources, distribute them continuously, govern them precisely, and run either as a managed cloud service or as a private deployment.

When enterprises come to us, the request is usually very consistent:

"We want a stable and controllable Codex service distribution model so engineers and employees can use it directly. If we also need to connect ChatGPT Pro, plus resources from Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan, can all of that live inside one governance system? We also want internal systems to call AI through one unified API. If usage grows, we may also want a private deployment."

That is exactly the service shape we now offer to enterprises.

What we deliver is not an isolated account, and not a shared company login. We deliver a complete system:

- Codex service distribution so the enterprise can distribute Codex resources and several mainstream AI APIs to employees, teams, and projects

- Unified access through XAI Router for ChatGPT Pro and mainstream AI Coding plans or model capacity, including Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, and Kimi Coding Plan

- A primary/sub-account governance structure that brings model permissions, quotas, rate limits, and analytics into one control plane

- A choice between cloud-based dedicated association and distribution, or private deployment of XAI Router inside the enterprise

In one sentence: the enterprise is not buying an account, and not buying a single model endpoint. It is buying an AI productivity delivery system.

Why Enterprises Should Not Stop at "Buying a Few Accounts" or "Connecting One Model"

Buying a few AI accounts may look convenient in the short term, but once usage enters real organizational workflows, four problems appear quickly.

1. If Accounts Sit with Individuals, the Enterprise Does Not Really Control Them

Employees logging in themselves, keeping credentials locally, and configuring tools on their own may look flexible, but it leaves major management gaps:

- Offboarding becomes difficult when employees leave

- The company cannot clearly verify which tools are still tied to the same capability

- Management cannot distribute AI capabilities consistently by department, project, or role

- Once usage expands, the organization falls back into disorder: whoever gets an account first gets the capability first

2. A Working Chat UI Does Not Mean Engineering and Business Systems Are Really Connected

Many companies purchase AI services and then find that only a few employees can use them in a browser. The teams that really matter for deployment (engineering, automation, internal systems, and agent workflows) still do not have a unified access path.

What enterprises actually need is:

- direct access to Codex and similar engineering tools

- unified API access for internal systems

- centralized control over which models are allowed, how much can be used per day, and what happens when limits are reached

3. Costs Will Rise Faster Than Budget Control and Attribution

If an enterprise only buys isolated accounts, very practical questions show up quickly:

- Which departments are using AI heavily?

- Which employees are creating real output, and which are just consuming a lot?

- Which calls should stay on high-quality models, and which should move to lower-cost models?

- Which budgets should be assigned to engineering, and which to operations, support, or analytics?

Without a unified sub-account system and unified usage analytics, AI cost turns into a growing total bill that becomes harder and harder to explain.
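As a rough illustration of what attribution means in practice, the sketch below rolls per-call usage records up by department and by model. The record fields (`sub_account`, `department`, `model`, `cost`) and the sample values are hypothetical and only serve the example; they are not an actual XAI Router export format.

```python
from collections import defaultdict

# Hypothetical usage records, one per call; field names and values are illustrative.
usage = [
    {"sub_account": "eng/alice", "department": "engineering", "model": "model-a", "cost": 1.20},
    {"sub_account": "eng/bob", "department": "engineering", "model": "model-b", "cost": 0.30},
    {"sub_account": "support/bot", "department": "support", "model": "model-b", "cost": 0.25},
    {"sub_account": "eng/alice", "department": "engineering", "model": "model-a", "cost": 0.80},
]


def cost_by(records, key):
    """Sum the cost of all records that share the same value for `key`."""
    totals = defaultdict(float)
    for record in records:
        totals[record[key]] += record["cost"]
    # Round only at the end so small float errors do not leak into the report.
    return {name: round(total, 2) for name, total in totals.items()}


print(cost_by(usage, "department"))
print(cost_by(usage, "model"))
```

Once every call is tagged with a sub-account, the same grouping can be applied to any dimension the enterprise cares about, such as project or time window.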

4. Once Security and Compliance Matter, Single-Account Thinking Breaks

Especially in engineering, finance, healthcare, government, and manufacturing, business teams quickly start asking for things like:

- upstream API keys must not be spread to every employee

- all calls must be auditable

- permissions must inherit along the organizational structure

- data and control planes should stay inside the enterprise environment

At that point, "buy a few accounts" is no longer a real solution.

What This Service Actually Delivers

1. Primary Codex Service Distribution

The first layer is a stable, controllable, and operational Codex service distribution model for the enterprise.

Its value is not just "the team can use Codex." It turns Codex into a capability that can be formally distributed, formally governed, and formally expanded inside the company. The enterprise gets a standard capability path that can enter its organizational structure instead of relying on scattered personal usage patterns.

For enterprise leadership, that means:

- engineering teams and employees can obtain Codex capability through one unified entry point

- future permission distribution, account governance, and capacity expansion all start from one unified path

- the company can build its own AI Coding operating model instead of inheriting personal usage habits

More importantly, ChatGPT Pro can be governed as one high-value upstream capability in the system, but it should not be the single center of the whole offering.

2. Unified Access for Domestic and International AI Coding Plans and Model Capacity

Most enterprises do not actually need just one provider. They need a mix of upstream capacity sources for different scenarios.

For example:

- engineering teams may want Codex as the primary high-value coding capability, with ChatGPT Pro added where needed as an upstream source

- some business teams may prefer domestic cloud or domestic model services

- the company may already have purchased plans or resources from Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan and wants those governed centrally

- management does not want employees to keep reconfiguring separate accounts, permissions, and reporting systems for every vendor

That is where XAI Router becomes more than a routing URL. It becomes a unified AI resource entry point.

Through XAI Router and its AI Provider components, the enterprise can connect different upstream resources into one control plane. Whether the source is upstream capacity behind Codex, ChatGPT Pro, or domestic model resources such as Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan, they can all:

- be independently associated with the enterprise's dedicated primary account on the cloud-based XAI Router

- be distributed onward to employees, departments, and projects through a primary/sub-account hierarchy

- run under one consistent system for model permissions, quotas, rate limits, analytics, and audit

- later migrate smoothly to an internal private deployment of XAI Router

This means the enterprise does not need to rebuild governance every time it wants to add another model or another provider. Upstream resources can expand; downstream governance stays unified.

3. Connect Everything Through XAI Router and Build an Enterprise Sub-Account Structure

The next layer is to connect all of this through XAI Router.

This is critical, because the real enterprise requirement is not just "the primary account works." It is that the capability can be distributed, governed, and operated downward.

Inside XAI Router, the enterprise can build its own primary/sub-account structure:

- the primary account controls the global AI resource pool

- sub-accounts can be created by department, project team, role, or employee

- upper-level governance boundaries inherit downward, while lower levels can narrow scope without breaking parent constraints

- distribution is not just about credits; it can also include model permissions, rate limits, and daily limits

This is the point where AI starts to look less like an ad hoc tool and more like a governable internal resource, similar to budget, cloud capacity, or SaaS licenses.
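The inheritance rule described above (lower levels may narrow scope but never exceed the parent) can be sketched as a simple clamp. This is a minimal illustration under stated assumptions, not XAI Router's implementation: the `Policy` class, its field names, the per-minute rate limit, and the model names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical governance boundary for one account (names are illustrative)."""
    allow_models: frozenset
    daily_limit: float      # assumed: daily spend ceiling, in credits
    rate_limit_rpm: int     # assumed: requests per minute


def effective_policy(parent: Policy, requested: Policy) -> Policy:
    """Clamp a child's requested policy so it never exceeds its parent's."""
    return Policy(
        allow_models=parent.allow_models & requested.allow_models,
        daily_limit=min(parent.daily_limit, requested.daily_limit),
        rate_limit_rpm=min(parent.rate_limit_rpm, requested.rate_limit_rpm),
    )


primary = Policy(frozenset({"model-a", "model-b", "model-c"}), 1000.0, 600)
engineering = effective_policy(
    primary, Policy(frozenset({"model-a", "model-b"}), 400.0, 300)
)
# A request that asks for more than the department holds is narrowed to fit.
employee = effective_policy(
    engineering, Policy(frozenset({"model-b", "model-z"}), 900.0, 60)
)

print(sorted(employee.allow_models), employee.daily_limit, employee.rate_limit_rpm)
# prints: ['model-b'] 400.0 60
```

Intersecting allowlists and taking the minimum of each numeric limit means a change at the primary account tightens every descendant automatically, with no per-employee edits.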

4. Put Unified AI APIs and Multi-Upstream Capabilities Directly in the Hands of Employees

In many enterprises, the real need is not "another chat window." It is:

- engineering teams need direct Codex access

- internal tools need one unified AI API

- support, operations, and analytics systems need one model gateway

- IT needs to know who is calling what, how much it costs, and whether limits are being exceeded

That is exactly where XAI Router matters.

Through the primary/sub-account structure, the enterprise can distribute Codex resources, ChatGPT Pro-backed capability, and AI API capability directly to employees and internal systems. What users receive is an enterprise-governed access credential, not direct exposure to complex upstream credentials.

More importantly, the distributed capability does not have to come from one upstream source. Engineering can prioritize Codex, high-value scenarios can use ChatGPT Pro where appropriate, business systems can use domestic model capacity, and automation can choose model tiers based on cost and stability, all while the enterprise keeps one account system, one policy system, and one analytics system.

From an access point of view, the enterprise can unify all of the following behind one gateway:

- Codex CLI / App on native Responses

- OpenAI-compatible APIs on /v1/chat/completions

- Claude-compatible access on /v1/messages

- domestic and international model resources mapped, distributed, and governed through one enterprise entry point

- engineering scripts, automation, internal apps, and agent services all converging on the same gateway

The short version is simple: front-end tools can stay diverse, but enterprise back-end governance should have one entry point.
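To make the "one entry point" idea concrete, the sketch below builds (but does not send) a request for each protocol against a single gateway address using a single sub-account credential. Several details are assumptions made for illustration: the base URL, the `/v1/responses` path for native Responses, bearer-token authentication on every path, and the placeholder model name. Only `/v1/chat/completions` and `/v1/messages` come from the text above.

```python
GATEWAY_BASE_URL = "https://gateway.example.internal"  # hypothetical address

# The two compatibility paths appear in the article; the Responses path is assumed.
PROTOCOL_PATHS = {
    "responses": "/v1/responses",
    "openai_chat": "/v1/chat/completions",
    "claude_messages": "/v1/messages",
}


def build_request(protocol: str, sub_account_key: str, model: str, prompt: str) -> dict:
    """Describe an HTTP request for one protocol without sending it."""
    if protocol not in PROTOCOL_PATHS:
        raise ValueError(f"unsupported protocol: {protocol}")
    if protocol == "responses":
        body = {"model": model, "input": prompt}
    else:
        body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
        if protocol == "claude_messages":
            body["max_tokens"] = 1024  # the Messages format requires a token cap
    return {
        "method": "POST",
        "url": GATEWAY_BASE_URL + PROTOCOL_PATHS[protocol],
        # Assumed: the gateway accepts the sub-account credential as a bearer token.
        "headers": {
            "Authorization": f"Bearer {sub_account_key}",
            "Content-Type": "application/json",
        },
        "json": body,
    }


for name in PROTOCOL_PATHS:
    request = build_request(name, "sub-account-key-placeholder", "example-model", "Hello")
    print(request["method"], request["url"])
```

The point of the sketch is that only the path and body shape differ per client; the address and the enterprise-issued credential stay the same, which is what lets governance sit in one place.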

5. Beyond Employee Distribution: Low-Cost, High-Concurrency, Low-Latency API Traffic for Real Business Systems

Many enterprises overlook one thing after adopting AI: employee usage is only the beginning; real volume often comes from business systems.

Typical examples:

- online customer support, sales assistance, outbound QA, and knowledge-base Q&A

- content generation, summarization, tagging, moderation, and classification

- operational automation, analytics workflows, batch tasks, and agent orchestration

- apps, SaaS products, and internal enterprise platforms that need unified model access

These scenarios are different from individual employee usage. They care more about:

- low cost: different business flows can use different model tiers based on quality and budget

- high concurrency: the system must handle large parallel traffic spikes

- low latency: core workflows cannot become visibly slower just because a model is added

- stability: if one upstream provider degrades, business traffic should not fail as a whole

This is where XAI Router becomes even more valuable.

The enterprise can distribute high-value Codex capability to engineering teams and, at the same time, expose several mainstream AI APIs to business systems through one gateway for:

- multi-model routing and switching

- traffic splitting across different cost tiers

- unified authentication, quotas, rate control, and audit

- one governed access layer for high-volume production traffic

That means the enterprise is not just buying "AI for employees." It is also getting an AI API infrastructure layer that can actually enter production business flows.
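One way to picture cost-tier routing with failover is the sketch below: pick the cheapest healthy upstream in the preferred tier, and fall back to any healthy upstream if that tier is unavailable. The `Upstream` fields, provider names, tiers, and prices are hypothetical, and the selection rule is one simple possibility rather than a description of how XAI Router routes traffic.

```python
from dataclasses import dataclass


@dataclass
class Upstream:
    """Hypothetical upstream entry; the fields are illustrative, not a real schema."""
    name: str
    tier: str                   # e.g. "premium" or "economy"
    cost_per_1k_tokens: float
    healthy: bool = True


def pick_upstream(upstreams, preferred_tier):
    """Return the cheapest healthy upstream in the preferred tier.

    If that tier has no healthy upstream, fall back to the cheapest healthy
    upstream in any tier so business traffic does not fail as a whole.
    """
    healthy = [u for u in upstreams if u.healthy]
    if not healthy:
        raise RuntimeError("no healthy upstream available")
    in_tier = [u for u in healthy if u.tier == preferred_tier]
    return min(in_tier or healthy, key=lambda u: u.cost_per_1k_tokens)


pool = [
    Upstream("provider-a", "premium", 3.0),
    Upstream("provider-b", "economy", 0.4),
    Upstream("provider-c", "economy", 0.6),
]

first = pick_upstream(pool, "economy")
pool[1].healthy = False                  # simulate provider-b degrading
second = pick_upstream(pool, "economy")
pool[2].healthy = False                  # the whole economy tier is now down
third = pick_upstream(pool, "economy")
print(first.name, second.name, third.name)
# prints: provider-b provider-c provider-a
```

A production router would also weigh latency, concurrency limits, and quota headroom; the sketch only shows the tier-then-fallback ordering.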

6. Optional Private Deployment of XAI Router

If the enterprise needs stronger control, stricter security boundaries, internal-network deployment, or long-term data sovereignty, we can also deliver a private deployment of XAI Router.

That means the enterprise not only has a dedicated primary account and governance model, but can also deploy the control plane, routing plane, and management console to its own servers, private cloud, or dedicated network environment.

This mode is especially suitable for organizations that:

- have hard requirements on data boundaries

- want to own AI resources and governance logic for the long term

- do not want critical management operations to live in a third-party public environment

- are ready to move AI from trial usage into internal infrastructure

For those customers, private deployment is not "the more complicated version." It is the point where AI becomes part of the enterprise production system.

What This Means for Three Different Enterprise Roles

For Owners and Senior Management

You are not getting a few scattered accounts. You are getting an AI resource system that can actually be operated:

- AI capability can be distributed continuously through the organization

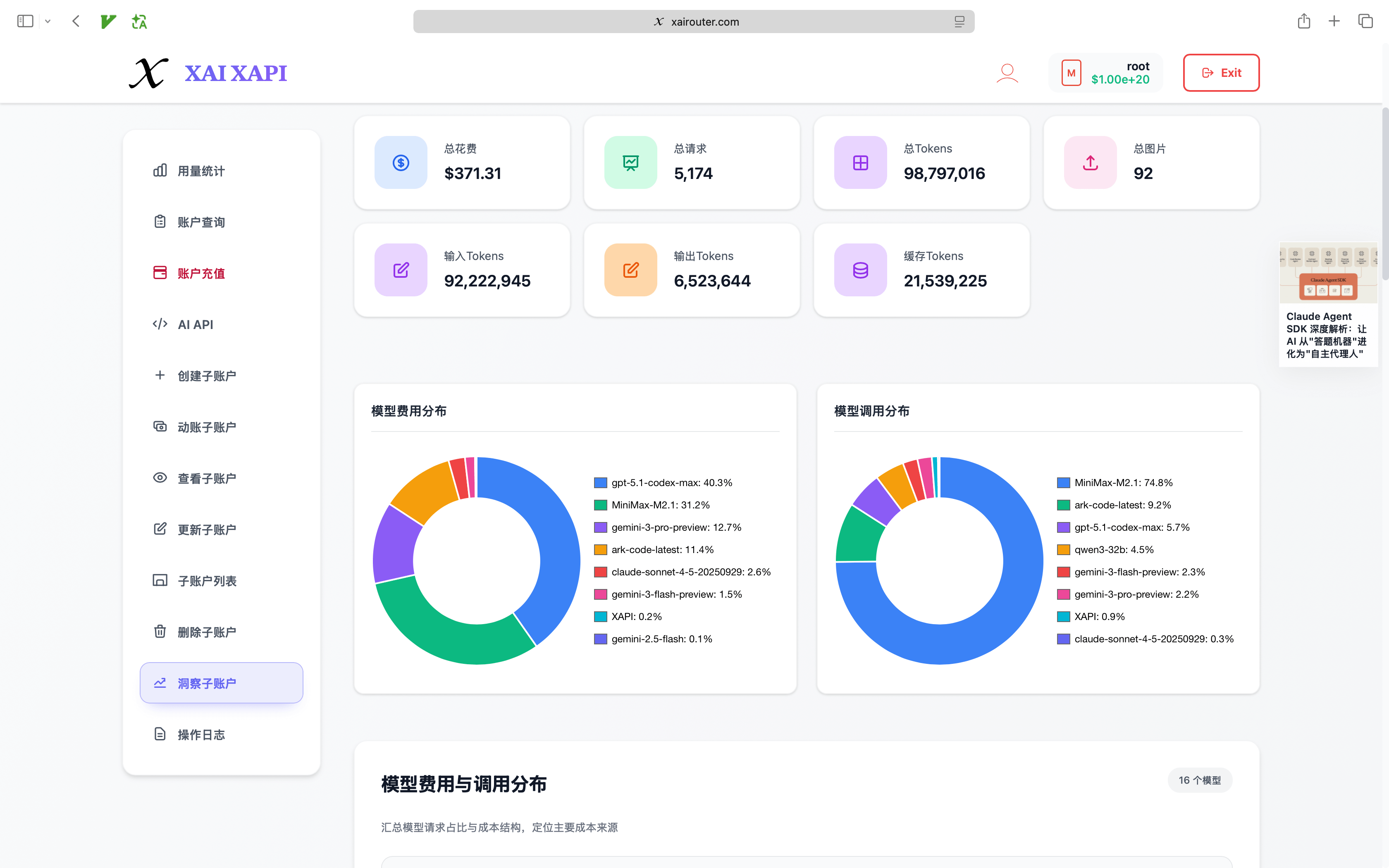

- cost can be analyzed by account, department, model, and time

- usage is no longer a black box

- the company can use domestic and international providers at the same time instead of being locked into one upstream vendor

- the same system can support both engineering Codex usage and large-scale business-side AI API traffic

- when the company decides to scale its AI investment, it does not have to rebuild everything from scratch

For IT and Digital Infrastructure Leaders

You get a unified control plane:

- a sub-account system that maps to organizational structure

- centralized governance of allow_models, model mapping, tier strategy, daily_limit, RPD, and TPD

- no need to spread upstream keys to employee endpoints

- encrypted upstream credential custody and clearer security boundaries

- no need to invent a new governance model every time the company adds Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan resources

- one gateway that can handle both employee tool traffic and business-system traffic

- a continuous path from managed cloud service to private deployment
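As a small illustration of daily limits at the sub-account level, the sketch below keeps two counters and refuses a call that would exceed either one. It reads RPD and TPD as requests per day and tokens per day, which is an assumption about the abbreviations; the `DailyQuota` class and its behavior are hypothetical and not taken from XAI Router.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DailyQuota:
    """Hypothetical per-sub-account counters.

    RPD and TPD are interpreted as requests per day and tokens per day,
    which is an assumption made for this example.
    """
    rpd: int
    tpd: int
    day: date = field(default_factory=date.today)
    requests_used: int = 0
    tokens_used: int = 0

    def try_consume(self, tokens: int, today: date) -> bool:
        """Record one request if it fits both limits; otherwise refuse it."""
        if today != self.day:  # a new day resets both counters
            self.day, self.requests_used, self.tokens_used = today, 0, 0
        if self.requests_used + 1 > self.rpd or self.tokens_used + tokens > self.tpd:
            return False
        self.requests_used += 1
        self.tokens_used += tokens
        return True


day_one, day_two = date(2026, 4, 12), date(2026, 4, 13)
quota = DailyQuota(rpd=3, tpd=1000, day=day_one)
results = [
    quota.try_consume(400, day_one),  # accepted
    quota.try_consume(500, day_one),  # accepted, 900 tokens used
    quota.try_consume(200, day_one),  # refused: would exceed the token limit
    quota.try_consume(100, day_one),  # accepted: third request, 1000 tokens used
    quota.try_consume(1, day_one),    # refused: request limit reached
    quota.try_consume(400, day_two),  # accepted: counters reset on a new day
]
print(results)
# prints: [True, True, False, True, False, True]
```

Because the check happens at the gateway rather than on the employee's machine, a refused call never reaches an upstream provider and never consumes upstream budget.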

For Engineering and Business Teams

You get AI capability that fits real workflows rather than demo-only chat access:

- developers can use Codex directly

- internal tools and services can connect through unified APIs

- existing OpenAI-style and Claude-style integrations can migrate gradually

- domestic and international model resources can be assigned into the same workflow based on team needs

- business systems can choose different AI APIs based on cost, latency, and stability targets

- teams do not need every individual to maintain complicated upstream configuration by hand

This Is Not a Slide Deck Architecture. It Is an Operable Enterprise Delivery Chain

What you are seeing here is not just a conceptual diagram.

From the current product capability perspective, this enterprise chain already has the shape of a real infrastructure system:

- XAI Router provides primary/sub-account structure, inheritance, model allowlists, budget boundaries, AI resource distribution, analytics, and management

- Codex service distribution is the current primary capability line, with a stable access strategy built around native Responses first and compatibility APIs preserved

- AI Provider and protocol bridge components connect different upstream resources, including ChatGPT Pro, OpenAI-compatible APIs, Claude-compatible entry paths, and domestic or international model services

- the management console already includes top-up, AI API access, sub-account list, create sub-account, inspect sub-account, update sub-account, delete sub-account, and sub-account insight workflows

In other words, when an enterprise buys this service, it is not buying a drawing. It is buying a running system that can be delivered, managed, and expanded.

A Better Enterprise Adoption Path: Start Fast, Then Gradually Pull Everything into Governance

Many enterprises do not need private deployment on day one, and they do not need every employee fully standardized on day one either.

A more realistic path usually looks like this:

- Start by delivering Codex service distribution so the core team can use the capability immediately

- Connect existing or planned resources from ChatGPT Pro, Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan into XAI Router

- Build the primary/sub-account structure in XAI Router and distribute Codex resources plus unified AI APIs to more employees

- As usage grows, add model permissions, rate limits, budget control, analytics, and audit gradually

- When security, control, and internal integration requirements rise further, move to private deployment

The key advantage is simple: the enterprise can start today without creating a future rebuild problem.

Which Enterprises Fit This Service Best

This model is usually a better fit than buying isolated accounts if:

- you want to formally distribute Codex capability across the enterprise without leaving control in personal employee hands

- you already have, or plan to buy, resources from Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, or Kimi Coding Plan and want unified access and distribution

- you want tens or hundreds of employees to use Codex or unified AI APIs

- you have both employee-side AI Coding needs and business-system-side high-volume AI API traffic

- you want engineering, operations, support, and analytics teams to share one governance framework

- you need sub-account distribution, quota boundaries, model permissions, and usage analytics

- you expect to move toward private deployment or dedicated network deployment later

Conclusion

For enterprises, the real question is never "did we buy an AI account?"

The real questions are:

- can AI capability be delivered stably into the organization?

- can the resource be distributed onward to employees and systems?

- can cost, permission, and risk be controlled?

- can the whole thing keep evolving as the business scales, instead of being rebuilt from scratch?

That is exactly what this service is designed to solve.

Codex service distribution is the starting point that better matches day-to-day enterprise usage.

What matters more is that the enterprise can continue using XAI Router to connect ChatGPT Pro, Volcengine Ark, Alibaba Cloud, Baidu Qianfan, Tencent Cloud, MiniMax, Zhipu, Kimi Coding Plan, and other domestic or international AI Coding plans and model resources into one primary/sub-account system, then distribute Codex resources and several mainstream AI APIs to employees and business systems. That supports both high-value engineering coding scenarios and low-cost, high-concurrency, low-latency production business traffic. As the business matures, the enterprise can also move to private deployment of XAI Router and fully control its AI resources and governance model.

If you are looking for an AI delivery model that can land quickly today and still be governable tomorrow, this is much closer to a sustainable answer than buying scattered accounts.